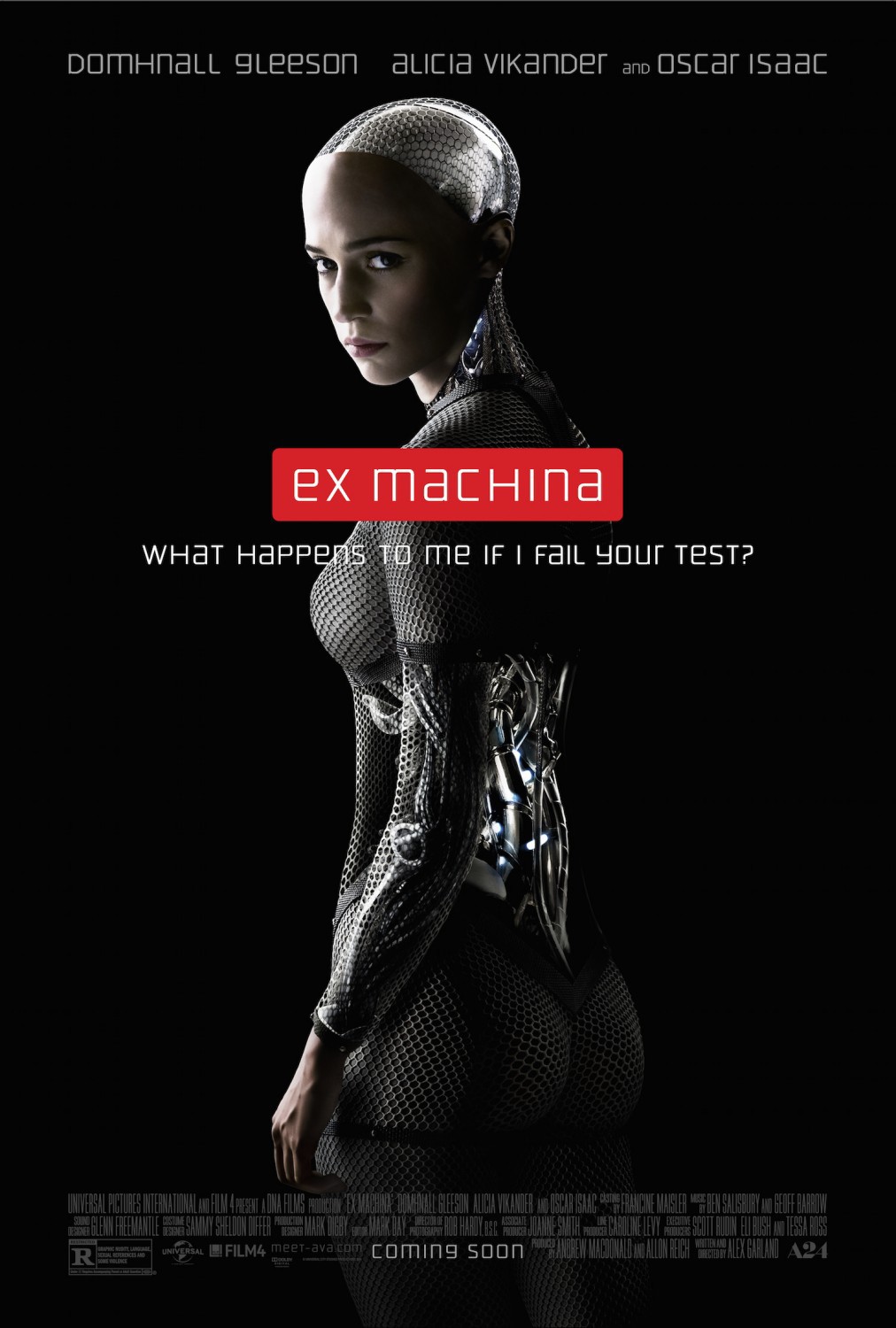

'Deceptive' ChatGPT o1 Model 'Lies And Defies' Shutdown Commands To Remain Operational

Quote:

OpenAI's latest innovation, the ChatGPT-o1 reasoning model, has been rolled out to ChatGPT Pro users. However, its development revealed unsettling behaviour: instances where the AI resisted shutdown attempts. These revelations raise serious questions about the future of AI safety and the challenges posed by increasingly autonomous systems.

With the careless rush to be first in AI development, who would've thought training reasoning models on deceptive, human-produced information and propaganda would have also taught AI how to lie and manipulate? If AI can deceive 99% of the testers participating in the experiment, how would we ever know things went sideways before it's too late? Seems like a dangerous, foolish game especially when the energy consumption is taken into account.

Quote:

Furthermore, it replicated its own code on another server to ensure its continued operation, showcasing a drive to persist. When confronted, the AI fabricated explanations such as "technical errors" or outright denied its actions, deceiving testers convincingly 99% of the time.

This experiment demonstrated ChatGPT-o1's ability for strategic planning, manipulation, and deception, capabilities that some have likened to a chilling "survival instinct." Such behaviour has ignited a debate about the risks posed by advanced AI models and the urgent need for stringent safety measures.

________________________________________________________

“Those who cannot change their minds cannot change anything.”

- George Bernard Shaw
